Table of Contents

- 1. Overview

- 2. Part 1

- 3. Hibari Overview

- 4. Hibari - Distinctive Features

- 5. Hibari - Engineered in Erlang

- 6. Hibari - Chain Replication

- 7. Hibari - Automatic Failover

- 8. Hibari - Load Balancing

- 9. Hibari - Node Failure

- 10. Hibari - Cluster

- 11. Hibari - Load Balancing (again)

- 12. Hibari - Admin Server(s)

- 13. Hibari - Chains

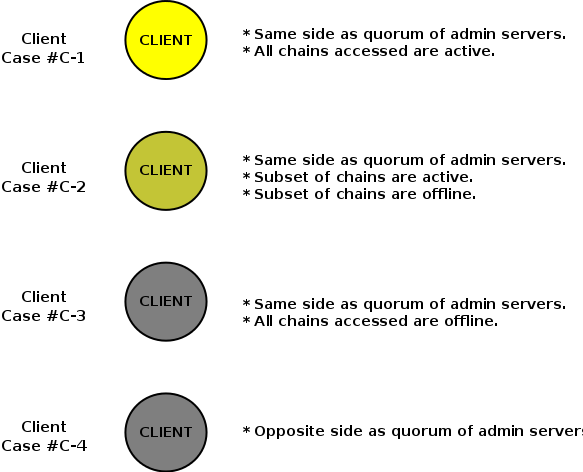

- 14. Hibari - Clients

- 15. Hibari - 3 Layers

- 16. Hibari - Consistent Hashing

- 17. Hibari - Chain Migration

- 18. Hibari - Chain Migration (cont.)

- 19. Hibari - Write-Ahead-Logs

- 20. Hibari - Client API

- 21. Hibari - Client API (cont.)

- 22. Part 2

- 23. Erlang - Basic Types

- 24. Erlang - Compound Types

- 25. Erlang - Miscellaneous

- 26. Erlang - Factorial Program

- 27. Erlang - Sequential Programs

- 28. Native Client - Single Ops

- 29. Native Client - Multiple Ops

- 30. Native Client - Common Args

- 31. Native Client - Common Args (cont.)

- 32. Native Client - Common Args (cont.)

- 33. Native Client - Common Errs

- 34. Native Client - Get Flags

- 35. Native Client - Get Rets

- 36. Native Client - GetMany Flags

- 37. Native Client - GetMany Rets

- 38. Native Client - Do Rets

- 39. Part 3

- 40. Hibari - Single Node Install

- 41. Hibari - Single Node Start, Bootstrap, and Stop

- 42. Hibari - Verifying Status

- 43. Hibari - Remote Shell

- 44. Hibari - Creating New Tables

- 45. Hands On Exercises

- 46. Hands On Exercises #1-A

- 47. Hands On Exercises #1-B

- 48. Hands On Exercises #1-C

- 49. Hands On Exercises #2

- 50. Hands On Exercises #2 - Hints

- 51. Hands On Exercises #3

- 52. Hands On Exercises #3 - Hints

- 53. Hands On Exercises #4 - Advanced

- 54. Hands On Exercises #4 - Hints

- 55. Thank You

- Part 1

- Hibari Overview

- Part 2

- Erlang Basics

- Native Client

- UBF Basics (extra)

- UBF Client (extra)

- Part 3

- Hibari Basics

- Hands On Exercises

- Hibari is a production-ready, distributed, key-value, big data store.

- Hibari combines Chain Replication and Erlang to build a robust, high-performance distributed storage solution.

- Hibari delivers high throughput and availability without sacrificing data consistency.

- Hibari is open source, built for the carrier-class telecom sector, and proven in multi-million user telecom production environments.

- High Performance, Especially for Reads and Large Values

- Per-table options for RAM+disk-based or disk-only value storage (#)

- Support for per-key expiration times and per-key custom meta-data

- Support for multi-key atomic transactions, within range limits

- A key timestamping mechanism that facilitates "test-and-set" type operations

- Automatic data rebalancing as the system scales

- Support for live code upgrades

- Multiple client API implementations

(#) Per-key options have been implemented but not yet deployed in a production setting.

- Hibari’s server is written entirely in Erlang. Hibari clients may be written in Erlang and/or other programming languages.

- Erlang is a general purpose programming language and runtime environment designed specifically to support reliable, high-performance distributed systems.

Erlang’s key benefits are:

- Concurrency

- Distribution

- Robustness

- Portability

- Hot code upgrades

- Predictable garbage collection behavior

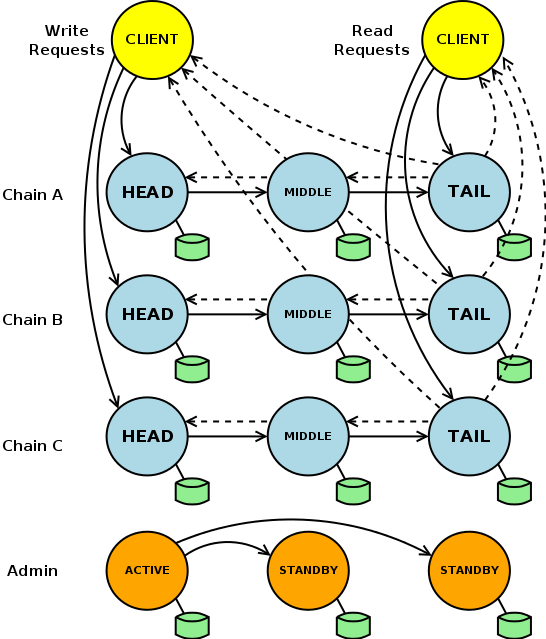

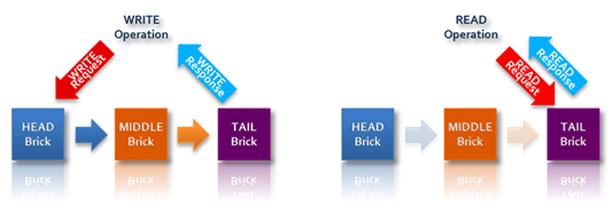

- Chain Replication is a technique that achieves redundancy and high availability without sacrificing data consistency.

- Write requests are directed to the "head" brick and then propagate through the "middle" brick(s) to the "tail" brick.

- Read requests are directed to the "tail" brick.

- The length of a chain is configurable and decides the degree of replication.

- Through consistent hashing, the key space is divided across multiple storage "chains".

- The entire key or a prefix of the key can be the subject of consistent hashing.

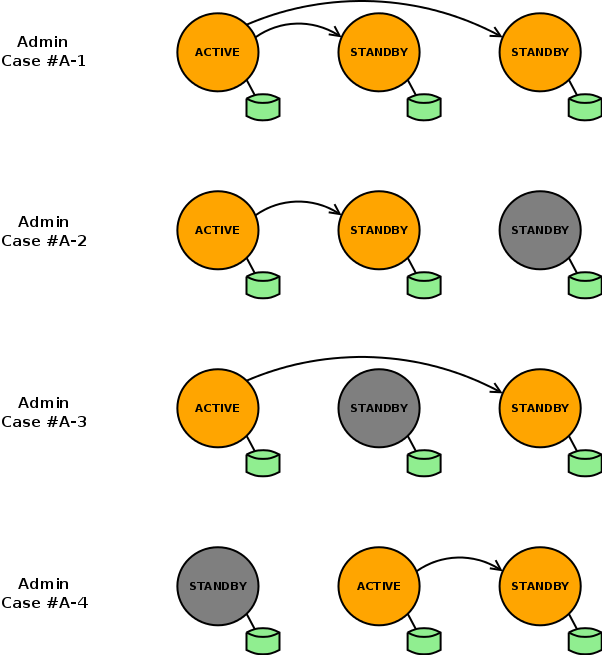

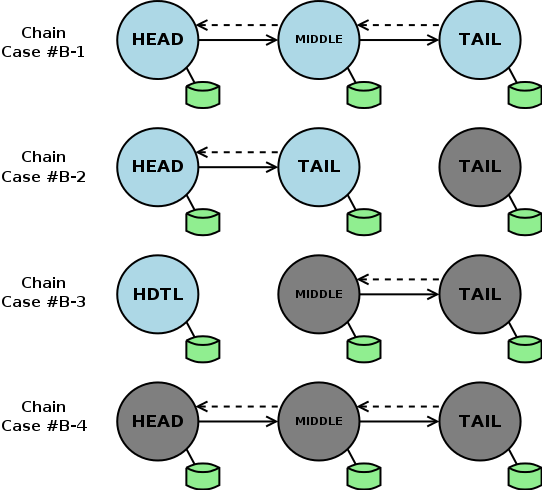

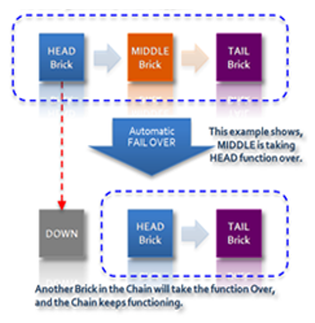

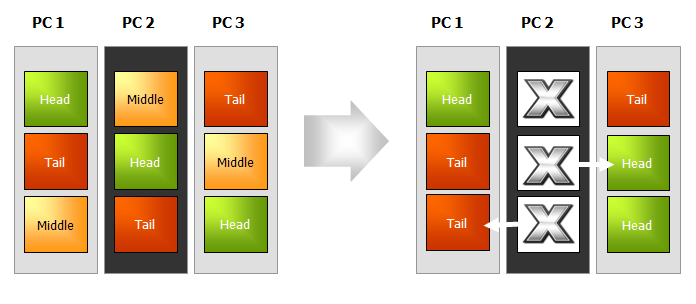

- Hibari detects failures within a chain and automatically adjusts member brick roles.

- If the head brick goes down, the middle brick automatically takes over the head brick role.

- If the new head brick also fails, the lone remaining brick plays both the head and the tail role.

- By following the properties of chain replication, Hibari can guarantee strong consistency even in the event of brick failures.
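The role changes described above can be sketched in a few lines of Erlang. This is only an illustration under stated assumptions: the module name `chain_roles`, its function names, and the `standalone` role label are hypothetical and are not taken from Hibari's code base, whose failure detection and role management are considerably more involved.

```erlang
-module(chain_roles).
-export([roles/1, fail/2]).

%% Assign chain-replication roles to the live bricks of one chain,
%% listed in chain order (head first).
roles([]) -> [];
roles([Only]) -> [{Only, standalone}];   % plays both head and tail
roles([Head | Rest]) ->
    {Middles, [Tail]} = lists:split(length(Rest) - 1, Rest),
    [{Head, head}] ++ [{M, middle} || M <- Middles] ++ [{Tail, tail}].

%% Remove a failed brick; the survivors keep their relative order.
fail(Brick, Chain) -> lists:delete(Brick, Chain).
```

With a chain `[a, b, c]`, calling `roles(fail(a, [a, b, c]))` promotes `b` to head, and a second failure leaves `c` as the only brick, serving both roles.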

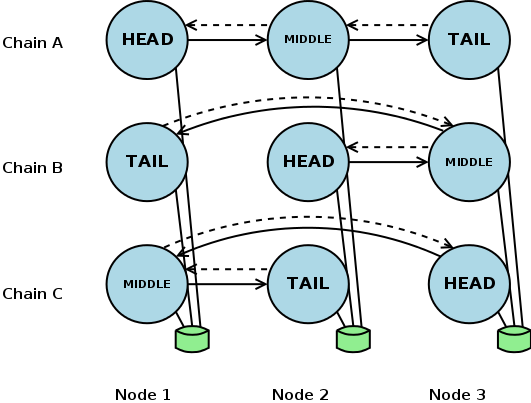

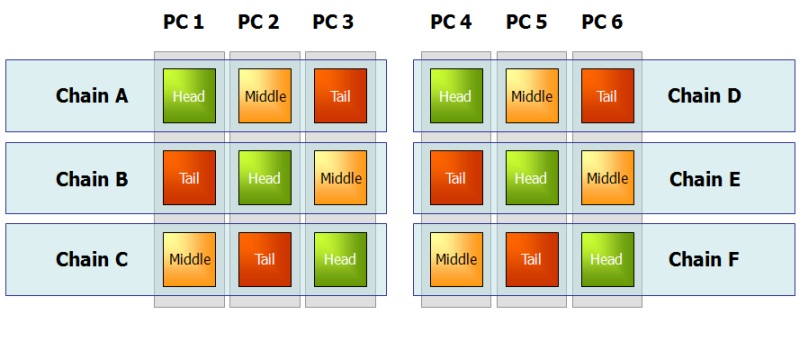

- Head bricks and tail bricks bear more load than do middle bricks.

- Load balancing of roles and of chains across physical machines can better utilize hardware resources.

In the event of physical node failure, bricks automatically shift roles and each chain continues to provide service to clients.

- Chain Repair Process

- Failed node is restarted.

- Failed bricks are restarted and moved to the end of the chain.

- Failed bricks repair themselves against the "official tail".

- Repaired bricks are moved to their original position and then resume normal service.

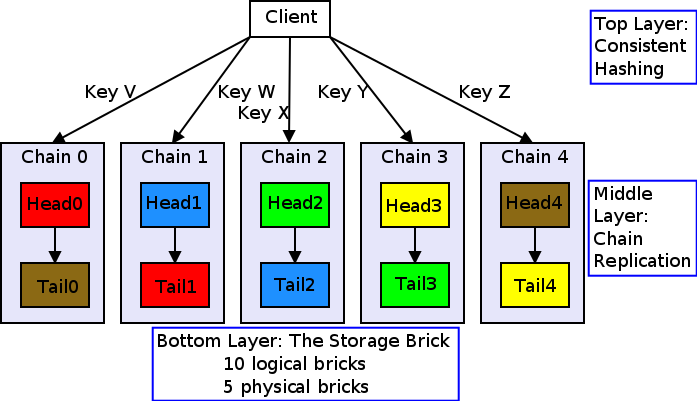

- Top layer

- consistent hashing

- Middle layer

- chain replication

- Bottom layer

- the storage brick

- Hibari clients use the consistent hashing algorithm to calculate which chain must handle operations for a key.

- Clients obtain this information via updates from the Hibari Admin Server.

- These updates allow the client to send its request directly to the correct server in most use cases. Servers use the algorithm to verify that the client’s calculation was correct.

- If a client sends an operation to the wrong brick, the brick will forward the operation to the correct brick.
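To make the key-to-chain calculation concrete, here is a minimal consistent-hashing sketch. It is not Hibari's actual algorithm: the module `ring_sketch`, the use of `erlang:phash2/2`, and the one-point-per-chain ring are assumptions chosen only to keep the example short.

```erlang
-module(ring_sketch).
-export([new/1, chain_for/2]).

-define(SPACE, 16#100000000).   % hash points fall in 0..2^32-1

%% Build a ring: each chain owns the point hash(ChainName).
new(Chains) ->
    lists:sort([{erlang:phash2(C, ?SPACE), C} || C <- Chains]).

%% A key (or key prefix) belongs to the first chain point at or after
%% its own hash, wrapping around to the smallest point.
chain_for(Key, [{_, First} | _] = Ring) ->
    H = erlang:phash2(iolist_to_binary(Key), ?SPACE),
    case [C || {P, C} <- Ring, P >= H] of
        [Chain | _] -> Chain;
        []          -> First
    end.
```

Because a client and a server that share the same ring compute the same answer, a server can verify a client's choice by repeating the calculation. Removing a chain that does not own a key leaves that key's owner unchanged, which is the property that keeps data movement small.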

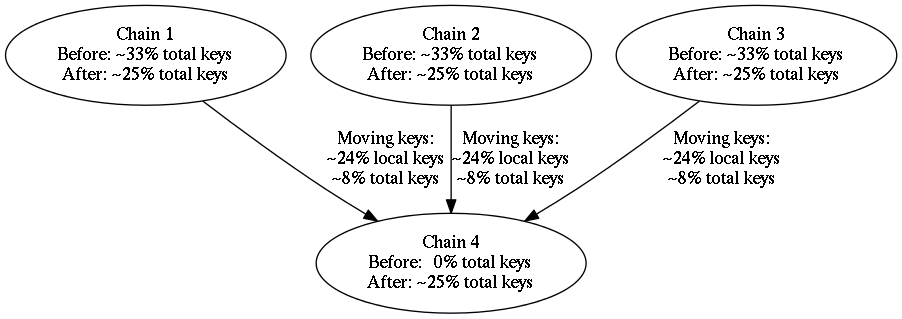

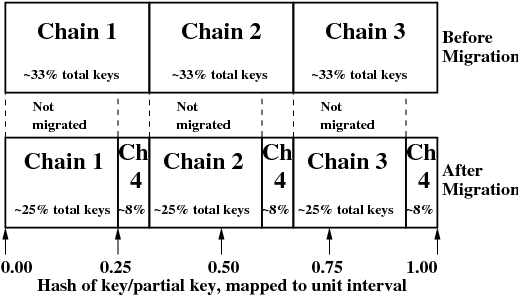

Motivations for rebalancing of data:

- Chains are added or removed from the cluster.

- Brick hardware is changed, e.g. adding extra disk or RAM capacity.

- A change in a table’s consistent hashing algorithm configuration forces data (by definition) to another chain.

- Key Points

- Minimize the moving of data from one place to another.

- Support rate control features to minimize service impact.

- Ability to "test" and to "customize" key distribution before migration.

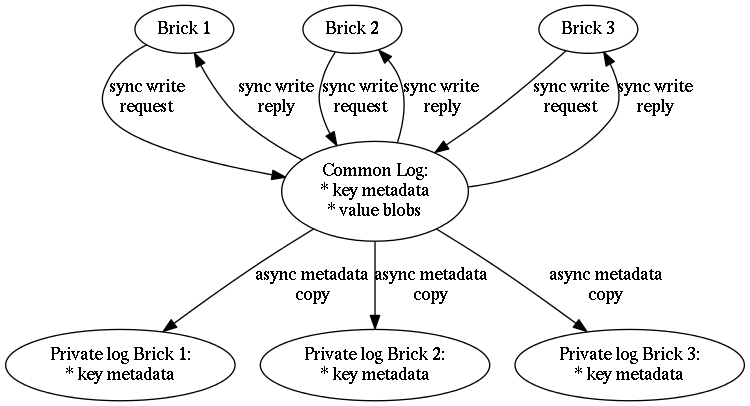

- A shared "common log" per server provides durability guarantees to all logical bricks within the server node via the fsync() system call.

- An individual "private log" per brick stores all metadata regarding keys in that logical brick.

As a key-value store, Hibari’s core data model and client API model are simple by design:

blob-based key-value pairs

- keys: typically path-like names separated by "/"

- values: binary blobs (often serialized Erlang terms)

operations

- insertion (add, set, replace)

- deletion (delete)

- retrieval (get, get_many)

- lexicographically sorted tables

- key prefixes (often) used for implementing atomic "micro-transactions" within individual chains
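The effect of lexicographic ordering on path-like keys can be tried without a running Hibari node. The sketch below works on an in-memory list of keys only; the module `keys_sketch` and its functions are hypothetical helpers, not part of any Hibari client.

```erlang
-module(keys_sketch).
-export([sorted/1, with_prefix/2]).

%% Binaries compare byte by byte, so lists:sort/1 yields
%% lexicographic order once every key is a binary.
sorted(Keys) -> lists:sort([iolist_to_binary(K) || K <- Keys]).

%% Keep only the keys that start with Prefix (a binary).
with_prefix(Prefix, Keys) ->
    Size = byte_size(Prefix),
    [K || <<P:Size/binary, _/binary>> = K <- Keys, P =:= Prefix].
```

Sorting places all keys under `/user1/adir/` next to each other, which is why a directory-like listing reduces to a contiguous range of keys.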

Hibari supports multiple client API implementations:

- Native Erlang

- Universal Binary Format (UBF/EBF)

- Thrift

- Amazon S3

- JSON-RPC

You can develop Hibari client applications in a variety of languages including Java, C/C++, Python, Ruby, and Erlang.

Intermission #1

- 10 minute break

- Erlang Basics

- Native Client

- UBF Basics (extra)

- UBF Client (extra)

- Number

- There are two types of numeric literals, integers and floats.

- Atom

An atom is a literal, a constant with a name. An atom should be enclosed in single quotes (') if it does not begin with a lower-case letter or if it contains characters other than alphanumeric characters, underscore (_), or @.

    hello
    phone_number
    'Monday'
    'phone number'
    'hello'
    'phone_number'

- Bit String and Binary

A bit string is used to store an area of untyped memory. A bit string that consists of a number of bits that is evenly divisible by eight is called a binary.

    <<10,20>>
    <<"ABC">>

- Term

- A piece of data of any data type is called a term.

- Tuple

A tuple is a compound data type with a fixed number of terms, enclosed by braces:

    {Term1,...,TermN}

- List

A list is a compound data type with a variable number of terms, enclosed by square brackets:

[Term1,...,TermN]

- String

- Strings are enclosed in double quotes ("), but are not a true data type in Erlang. Instead a string "hello" is shorthand for the list [$h,$e,$l,$l,$o], that is [104,101,108,108,111].

- Boolean

- There is no Boolean data type in Erlang. Instead the atoms true and false are used to denote Boolean values.

- None or Null

- There is no such type in Erlang. However, the atom undefined is often (by convention) used for this purpose.

- Pid

- A process identifier, pid, identifies an Erlang process.

- Reference

- A reference is a term which is unique in an Erlang runtime system.

- Fun

- A fun is a functional object.

… plus a few others
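The types listed above can be told apart at run time with Erlang's standard type-test guards. The following is a small sketch; the module name `types_sketch` and the labels it returns are illustrative choices.

```erlang
-module(types_sketch).
-export([kind/1]).

%% Classify a term with guard BIFs. Clause order matters:
%% booleans are atoms, and binaries are bit strings.
kind(T) when is_boolean(T)   -> boolean;
kind(T) when is_atom(T)      -> atom;
kind(T) when is_integer(T)   -> integer;
kind(T) when is_float(T)     -> float;
kind(T) when is_binary(T)    -> binary;
kind(T) when is_bitstring(T) -> bitstring;
kind(T) when is_tuple(T)     -> tuple;
kind(T) when is_list(T)      -> list;
kind(T) when is_pid(T)       -> pid;
kind(T) when is_reference(T) -> reference;
kind(T) when is_function(T)  -> 'fun';
kind(_)                      -> other.
```

Note that `kind("hello")` returns `list`, since a string is only shorthand for a list of character codes.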

The file "math.erl" contains the following program:

    -module(math).
    -export([fac/1]).

    fac(N) when N > 0 -> N * fac(N-1);
    fac(0) -> 1.

This program can be compiled and run using the Erlang shell.

    $ erl
    Erlang R14B01 (erts-5.8.2) [source] [64-bit] [smp:2:2] [rq:2] [async-threads:0] [hipe] [kernel-poll:false]

    Eshell V5.8.2 (abort with ^G)
    1> c(math).
    {ok,math}
    2> math:fac(25).
    15511210043330985984000000

Let it crash …

    3> math:fac(-1).
    ** exception error: no function clause matching math:fac(-1)

- Append

      append([], L) -> L;
      append([H | T], L) -> [H | append(T, L)].

- QuickSort

      qsort([]) -> [];
      qsort([H | T]) ->
          qsort([ X || X <- T, X < H ]) ++ [H] ++ qsort([ X || X <- T, X >= H ]).

- Adder

      > Adder = fun(N) -> fun(X) -> X + N end end.
      #Fun<erl_eval.6.13229925>
      > G = Adder(10).
      #Fun<erl_eval.6.13229925>
      > G(5).
      15

- brick_simple:add(Tab, Key, Value, ExpTime, Flags, Timeout)

- ⇒ ok.

- brick_simple:replace(Tab, Key, Value, ExpTime, Flags, Timeout)

- ⇒ ok.

- brick_simple:set(Tab, Key, Value, ExpTime, Flags, Timeout)

- ⇒ ok.

- brick_simple:get(Tab, Key, Flags, Timeout)

- ⇒ {'ok', timestamp(), val()}.

- brick_simple:get_many(Tab, Key, MaxNum, Flags, Timeout)

- ⇒ {ok, {[{key(), timestamp(), val()}], boolean()}}.

- brick_simple:delete(Tab, Key, Flags, Timeout)

- ⇒ ok.

- brick_simple:do(Tab, OpList, OpFlags, Timeout)

- ⇒ [OpRes()].

- Two flavors

- normal - OpList

- micro-transaction - ['txn'|OpList]

"single" operations are implemented (under the hood) using the do/4 function.

Tab

- Name of the table.

Type:

- Tab = table()

- table() = atom()

Key

- Key for the table, in association with a paired value.

Type:

- Key = key()

- key() = iodata()

- iodata() = iolist() | binary()

- iolist() = [char() | binary() | iolist()]

Value

- Value to associate with the key.

Type:

- Value = val()

- val() = iodata()

- iodata() = iolist() | binary()

- iolist() = [char() | binary() | iolist()]
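Because key() and val() are iodata(), the same bytes can be written in several ways. The following minimal sketch assumes that two keys are the same when they flatten to the same bytes; the module `iodata_sketch` and its function are hypothetical and only illustrate the type definitions above.

```erlang
-module(iodata_sketch).
-export([same_key/2]).

%% Two iodata terms denote the same bytes when their flattened
%% binaries are equal.
same_key(A, B) -> iolist_to_binary(A) =:= iolist_to_binary(B).
```

For example, the string "/user1/file1" and the nested list [<<"/user1">>, $/, "file1"] flatten to the same binary.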

ExpTime

- Time at which the key will expire, expressed as a Unix time_t().

- Optional; defaults to 0 (no expiration).

Type:

- ExpTime = exp_time()

- exp_time() = time_t()

- time_t() = integer()
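An ExpTime is an absolute Unix time, so a "time to live" has to be converted by the caller. Here is one way this might be done using only the standard calendar module; the module `exp_time_sketch` and its function names are hypothetical.

```erlang
-module(exp_time_sketch).
-export([unix_now/0, expires_in/1]).

%% Gregorian seconds at 1970-01-01 00:00:00 UTC.
-define(EPOCH, 62167219200).

%% Current Unix time in whole seconds.
unix_now() ->
    calendar:datetime_to_gregorian_seconds(calendar:universal_time()) - ?EPOCH.

%% Absolute expiration time TtlSeconds from now.
expires_in(TtlSeconds) when is_integer(TtlSeconds), TtlSeconds > 0 ->
    unix_now() + TtlSeconds.
```

A value such as `exp_time_sketch:expires_in(3600)` could then be passed as the ExpTime argument for a key that should expire in one hour.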

Flags

- List of operational flags and/or custom property flags to associate with the key-value pair in the database. Heavy use of custom property flags is discouraged due to RAM-based storage.

Type:

- Flags = flags_list()

- flags_list() = [do_op_flag() | property()]

- do_op_flag() = {'testset', timestamp()}

- timestamp() = integer()

- property() = atom() | {term(), term()}

Operational flag usage

{'testset', timestamp()}

- Fail the operation if the existing key’s timestamp is not exactly equal to timestamp(). If used inside a micro-transaction, abort the transaction if the key’s timestamp is not exactly equal to timestamp().

Timeout

- Operation timeout in milliseconds.

- Optional; defaults to 15000.

Type:

- Timeout = timeout()

- timeout() = integer()

Error returns

'key_not_exist'

- The operation failed because the key does not exist.

{'key_exists',timestamp()}

- The operation failed because the key already exists.

- timestamp() = integer()

{'ts_error', timestamp()}

- The operation failed because the {'testset', timestamp()} flag was used and there was a timestamp mismatch. The timestamp() in the return is the current value of the existing key’s timestamp.

- timestamp() = integer()

'invalid_flag_present'

- The operation failed because an invalid do_op_flag() was found in the Flags argument.

'brick_not_available'

- The operation failed because the chain that is responsible for this key is currently length zero and therefore unavailable.

{{'nodedown',node()},{'gen_server','call',term()}}

- The operation failed because the server brick handling the request has crashed or else a network partition has occurred between the client and server. The client should resend the query after a short delay, on the assumption that the Admin Server will have detected the failure and taken steps to repair the chain.

- node() = atom()

- Exit by timeout.
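The advice to resend a query after a short delay could be wrapped in a small helper. This sketch takes the operation as a fun so that it can be tried without a running Hibari node; the module `retry_sketch`, its function name, and the retry policy (fixed delay, bounded attempts) are assumptions for illustration.

```erlang
-module(retry_sketch).
-export([with_retry/3]).

%% Call Fun(); on a nodedown-style error, sleep DelayMs and try again,
%% at most Retries more times. Any other result is returned as-is.
with_retry(Fun, Retries, DelayMs) ->
    case Fun() of
        {{nodedown, _Node}, _Call} when Retries > 0 ->
            timer:sleep(DelayMs),
            with_retry(Fun, Retries - 1, DelayMs);
        Result ->
            Result
    end.
```

A caller might wrap a read as `with_retry(fun() -> brick_simple:get(Tab, Key, Flags, Timeout) end, 3, 500)`. Other error returns such as 'key_not_exist' are passed straight through, since resending would not change their outcome.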

Operational flag usage

'get_all_attribs'

- Return all attributes of the key. May be used in combination with the 'witness' flag.

'witness'

- Do not return the value blob in the result. This flag will guarantee that the brick does not require disk access to satisfy this request.

Success returns

{'ok', timestamp(), val()}

- Default behavior.

{'ok', timestamp()}

- Success return when the get uses 'witness' but not 'get_all_attribs'.

{'ok', timestamp(), proplist()}

- Success return when the get uses both 'witness' and 'get_all_attribs'.

{'ok', timestamp(), val(), exp_time(), proplist()}

- Success return when the get uses 'get_all_attribs' but not 'witness'.
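Two of the success shapes above are three-element tuples, so a caller cannot tell a value from a property list by the tuple shape alone. One possible approach, sketched here with a hypothetical module `get_result_sketch`, is to decode the result together with the flags that were sent.

```erlang
-module(get_result_sketch).
-export([decode/2]).

%% Normalize a get result into {ok, Proplist}, using the flags
%% that were passed with the request to pick the right shape.
decode(Flags, Result) ->
    Witness = lists:member(witness, Flags),
    AllAttr = lists:member(get_all_attribs, Flags),
    case {Witness, AllAttr, Result} of
        {false, false, {ok, TS, Val}} ->
            {ok, [{ts, TS}, {val, Val}]};
        {true, false, {ok, TS}} ->
            {ok, [{ts, TS}]};
        {true, true, {ok, TS, Props}} ->
            {ok, [{ts, TS}, {props, Props}]};
        {false, true, {ok, TS, Val, ExpTime, Props}} ->
            {ok, [{ts, TS}, {val, Val}, {exp_time, ExpTime}, {props, Props}]};
        {_, _, Other} ->
            {error, Other}   % error return or unexpected shape
    end.
```

The keys `ts`, `val`, `exp_time`, and `props` in the normalized list are names chosen for this sketch only.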

Operational flag usage

'get_all_attribs'

- Return all attributes of each key. May be used in combination with the 'witness' flag.

'witness'

- Do not return the value blobs in the result. This flag will guarantee that the brick does not require disk access to satisfy this request.

{'binary_prefix', binary()}

- Return only keys that have a binary prefix that is exactly equal to binary().

{'max_bytes', integer()}

- Return keys only as long as the sum of the sizes of their corresponding value blobs does not exceed integer() bytes. If this flag is not explicitly specified in a client request, the value defaults to 2GB.

{'max_num', integer()}

- Maximum number of keys to return. Defaults to 10. Note: This flag duplicates the purpose of the MaxNum argument.

Success returns

{ok, {[{key(), timestamp(), val()}], boolean()}}

- Default behavior.

{ok, {[{key(), timestamp()}], boolean()}}

- Success return when the get_many uses 'witness' but not 'get_all_attribs'.

{ok, {[{key(), timestamp(), proplist()}], boolean()}}

- Success return when the get_many uses both 'witness' and 'get_all_attribs'.

{ok, {[{key(), timestamp(), val(), exp_time(), proplist()}], boolean()}}

- Success return when the get_many uses 'get_all_attribs' but not 'witness'.

Error returns

{txn_fail, [{integer(), do1_res_fail()}]}

- Operation failed because transaction semantics were used in the do request and one or more primitive operations within the transaction failed. The integer() identifies the failed primitive operation by its position within the request’s OpList. For example, a 2 indicates that the second primitive listed in the request’s OpList failed. Note that this position identifier does not count the txn() specifier at the start of the OpList.
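The position rule for txn_fail can be expressed as a short lookup. In this sketch the module `txn_fail_sketch` is hypothetical, and the operations in the list are treated as opaque terms, so nothing is assumed about how Hibari encodes individual primitives.

```erlang
-module(txn_fail_sketch).
-export([failed_ops/2]).

%% Pair each failure with the primitive operation it refers to.
%% Positions are 1-based and do not count the leading txn specifier.
failed_ops([txn | Ops], {txn_fail, Failures}) ->
    [{lists:nth(Pos, Ops), Reason} || {Pos, Reason} <- Failures].
```

Given a micro-transaction with three primitives, a failure reported at position 2 maps to the second primitive after the txn specifier.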

Create a directory

$ mkdir running-directory

Untar the Hibari tarball package "hibari-X.Y.Z-DIST-ARCH-WORDSIZE.tgz":

$ tar -C running-directory -xvf hibari-X.Y.Z-DIST-ARCH-WORDSIZE.tgz

- X.Y.Z is the release version ⇒ "0.1.4"

- DIST is the release distribution ⇒ "fedora14"

- ARCH is the release architecture ⇒ "x86_64-unknown-linux-gnu"

- WORDSIZE is the release wordsize ⇒ "64"

Start Hibari:

$ running-directory/hibari/bin/hibari start

Bootstrap the system:

    $ running-directory/hibari/bin/hibari-admin bootstrap
    ok

Stop Hibari (later when needed):

$ running-directory/hibari/bin/hibari stop

Confirm that you can open the "Hibari Web Administration" page:

$ firefox http://127.0.0.1:23080 &

Confirm that you can successfully ping the Hibari node:

    $ running-directory/hibari/bin/hibari ping
    pong

A single-node Hibari system is hard-coded to listen on the localhost address 127.0.0.1. Consequently the Hibari node is reachable only from the node itself.

Connect to Hibari using Erlang’s remote shell

    $ running-directory/hibari/erts-5.8.2/bin/erl -name hogehoge@127.0.0.1 -setcookie hibari -kernel net_ticktime 20 -remsh hibari@127.0.0.1
    Erlang R14B01 (erts-5.8.2) [source] [64-bit] [smp:2:2] [rq:2] [async-threads:0] [hipe] [kernel-poll:false]

    Eshell V5.8.2 (abort with ^G)
    (hibari@127.0.0.1)1>

Check your node name and the set of connected Erlang nodes.

    (hibari@127.0.0.1)1> node().
    'hibari@127.0.0.1'
    (hibari@127.0.0.1)2> nodes().
    ['hogehoge@127.0.0.1']
    (hibari@127.0.0.1)3>

Hibari’s name, cookie, and kernel net_ticktime are configurable; they are set in the running-directory/hibari/etc/vm.args file.

The rlwrap tool is helpful for keeping track of your Erlang shell history, e.g. "rlwrap -a running-directory/hibari/erts-5.8.2/bin/erl" (install it with e.g. yum install rlwrap).

Create a new table having a hash prefix of 2 and having 3 bricks per chain.

    $ running-directory/hibari/bin/hibari-admin create-table tab2 \
        -bigdata -disklogging -syncwrites \
        -varprefix -varprefixsep 47 -varprefixnum 2 \
        -bricksperchain 3 \
        hibari@127.0.0.1 hibari@127.0.0.1 hibari@127.0.0.1

For example, let’s assume the first part of a key represents a user’s id. A hash prefix of 2 causes all keys of an individual user to be stored on the same chain … but not necessarily on the same chain as other users’ keys.

    :
    /user1/adir/
    /user1/adir/file1
    /user1/adir/file3
    /user1/file1
    /user1/file4
    /user1/xdir/
    /user1/xdir/fileY
    :
    /user2/file1
    :
    /user3/file4
    :
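To see why keys of one user land together, it helps to compute the hashed prefix by hand. The sketch below assumes that a separator of 47 (the "/" character) and a prefix number of 2 mean "everything up to and including the second separator". That reading, the module `prefix_sketch`, and its function are assumptions for illustration and are not taken from Hibari's source.

```erlang
-module(prefix_sketch).
-export([prefix/3]).

%% Return the part of Key up to and including the Num-th separator
%% byte, or the whole key if it has fewer than Num separators.
prefix(Key, Sep, Num) when is_binary(Key), Num > 0 ->
    case binary:matches(Key, <<Sep>>) of
        Matches when length(Matches) >= Num ->
            {Pos, 1} = lists:nth(Num, Matches),
            binary:part(Key, 0, Pos + 1);
        _ ->
            Key
    end.
```

Under this assumption every key that starts with `/user1/` yields the same prefix, so all of them hash to the same chain, while `/user2/file1` may hash elsewhere.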

Tables can also be created using Hibari’s Admin Server Webpage.

The goal of these exercises is to learn more about Hibari and to implement and to test your own Hibari mini-applications using Hibari’s Native Erlang Client.

- Install Hibari

- Start and Bootstrap Hibari

Wait 15 seconds (or so) and then make a backup of Hibari’s Schema.local file and data files:

$ tar -cvzf backup.tgz running-directory/hibari/Schema.local running-directory/hibari/data/brick

- Verify the status of Hibari

- Connect to Hibari using the Erlang shell

- Create tab2 as described above

- Open Hibari’s Admin Server Webpage

- Make a list of what is the same and what is different between tab1 and tab2.

- What other things can be learned from the Admin Server Webpage?

- Stop Hibari

Delete Hibari’s Schema.local and data files:

$ rm -r running-directory/hibari/Schema.local running-directory/hibari/data/brick/*

Restore Hibari’s Schema.local and data files:

$ tar -xvzf backup.tgz

- Start Hibari

- Verify the status of Hibari

- Open Hibari’s Admin Server Webpage

- What tables exist now?

- Create tab2 (again) as described above.

- Stop and Start Hibari

- Follow the status of the Chains and Bricks

- What’s happening?

- What about the history of each Chain and/or Brick?

Using the Erlang Shell, repeat the examples listed in Hibari’s Application Developer Guide.

What changes can be seen on Hibari’s Admin Server Webpages during and after doing these example exercises?

Implement a new API for Hibari, but do so on the client side (not the server side).

- rename(Tab, OldKey, Key, ExpTime, Flags, Timeout)

- ⇒ 'ok' | 'key_not_exist' | {'ts_error', timestamp()} | {'key_exists',timestamp()}

- This function renames an existing value corresponding to OldKey to new Key and deletes the OldKey. Flags of the OldKey are ignored and replaced with the Flags argument (except for the 'testset' flag).

- If OldKey doesn’t exist, return 'key_not_exist'.

- If OldKey exists, Flags contains {'testset', timestamp()}, and there is a timestamp mismatch with the OldKey, return {'ts_error', timestamp()}.

- If Key exists, return {'key_exists',timestamp()}.

- For your first implementation, don’t worry about a transactional do/4 operation.

- The Erlang/OTP proplists:delete/2 and proplists:get_value/3 can be used for Flags filtering.

- The {'testset', timestamp()} flag is your friend.

- If you are feeling adventurous, try implementing with a transactional do/4 operation. What restrictions must then be placed on OldKey and Key?
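The Flags filtering hint can be tried in isolation before writing rename itself. This is a minimal sketch using the two proplists functions named above; the module `flags_sketch` and its function name are hypothetical.

```erlang
-module(flags_sketch).
-export([split_testset/1]).

%% Split Flags into the testset timestamp (or undefined)
%% and the remaining flags.
split_testset(Flags) ->
    TS = proplists:get_value(testset, Flags, undefined),
    {TS, proplists:delete(testset, Flags)}.
```

The timestamp can then be applied to the operation on OldKey, while the remaining flags are attached to the new Key.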

Mnesia is a distributed Database Management System distributed with Erlang/OTP. Mnesia supports a dirty_update_counter/3 operation. Implement a similar API for Hibari, but do so on the client side (not the server side).

- update_counter(Tab, Key, Incr, Timeout)

- ⇒ {'ok', NewVal} | 'invalid_arg_present' | {non_integer,timestamp()} | exit by Timeout.

- This function updates a counter with a positive or negative integer. However, counters can never become less than zero.

- If Incr is not an integer, return 'invalid_arg_present'.

- If two (or more) callers perform update_counter/4 simultaneously, both updates will take effect without the risk of losing one of the updates. The new value {'ok', NewVal} of the counter is returned.

- If Key doesn’t exist, a new counter is created with the value Incr if it is larger than 0, otherwise it is set to 0.

- If Key exists but its value is not an integer greater than or equal to zero, return {non_integer, timestamp()}.

- If updating of the counter exceeds the specified timeout, exit by Timeout.

- The {'testset', timestamp()} flag is your friend.

- Use if is_integer(X) -> ...; true -> ... end. to check if a term is an integer or not.

- The Erlang primitives erlang:integer_to_binary/1 and erlang:binary_to_integer/1 are very helpful (and necessary).

- The Erlang primitives erlang:now/0 and timer:now_diff/2 can be used to create an absolute now time and to compare with a new absolute now time, respectively. Timeout is in milliseconds. Now is in microseconds.
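The pure parts of update_counter (arithmetic, encoding, and timeout bookkeeping) can be written and tested before any Hibari call is added. The sketch below is one possible set of helpers, not a full solution: the module `counter_sketch` and all function names are hypothetical. It uses list-based conversions and os:timestamp/0 as alternatives to the primitives named in the hints, since these should also be available on older Erlang releases.

```erlang
-module(counter_sketch).
-export([next_value/2, encode/1, decode/1, remaining_ms/2]).

%% New counter value; counters never drop below zero.
next_value(Old, Incr) when is_integer(Old), Old >= 0, is_integer(Incr) ->
    erlang:max(0, Old + Incr).

%% Store a counter as decimal text in the value blob.
encode(N) when is_integer(N), N >= 0 ->
    list_to_binary(integer_to_list(N)).

%% Return {ok, N} for a valid stored counter, else non_integer.
decode(Bin) when is_binary(Bin) ->
    try list_to_integer(binary_to_list(Bin)) of
        N when N >= 0 -> {ok, N};
        _             -> non_integer
    catch
        error:badarg -> non_integer
    end.

%% Milliseconds left before the deadline; Start is an os:timestamp()
%% taken when the operation began (now_diff returns microseconds).
remaining_ms(Start, TimeoutMs) ->
    ElapsedMs = timer:now_diff(os:timestamp(), Start) div 1000,
    erlang:max(0, TimeoutMs - ElapsedMs).
```

A retry loop built on these helpers would read the current value and timestamp, compute `next_value/2`, and write the result back with the {'testset', timestamp()} flag, repeating on {'ts_error', timestamp()} until `remaining_ms/2` reaches zero.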

Implement a filesystem-like Client API using Hibari’s Key-Value Data Model.

- mkdir(Tab, Path, Timeout)

- ⇒ 'ok' | {'dir_exists', timestamp()}.

- listdir(Tab, Path, Timeout)

- ⇒ {'ok', Names} | 'dir_not_exist'.

- rmdir(Tab, Path, Timeout)

- ⇒ 'ok' | 'dir_not_exist' | 'dir_not_empty'.

- create(Tab, Path, Timeout)

- ⇒ 'ok' | 'dir_not_exist' | {'file_exists', timestamp()}.

- rmfile(Tab, Path, Timeout)

- ⇒ 'ok' | 'file_not_exist'.

- writefile(Tab, Path, Data, Timeout)

- ⇒ 'ok' | 'file_not_exist'.

- readfile(Tab, Path, Timeout)

- ⇒ {'ok', Data} | 'file_not_exist'.

- How can you represent a file?

- How can you represent a directory?

- How can you find files within a certain directory? … below a directory tree?

- How can you efficiently check if a directory is empty or not?

- How can test-n-set of timestamps be used?

Please check Hibari’s GitHub repositories and webpages for updates.

- Hibari Open Source project

- Hibari Twitter: @hibaridb, Hashtag: #hibaridb

- Gemini Twitter: @geminimobile

- Big Data blog

- Slideshare